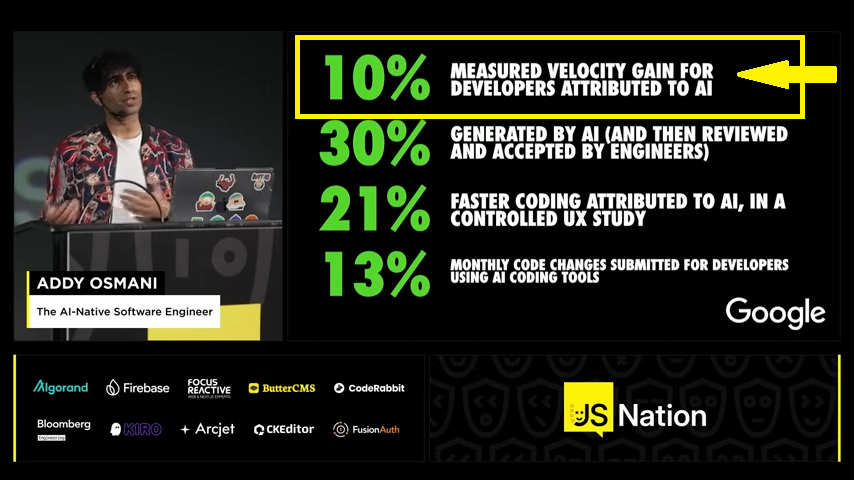

The AI-Native Software Engineer | Addy Osmani

A Google engineering leader describes how the role of the software engineer is changing in the AI era, and how AI is transforming DevOps practices.

Addy Osmani, a prominent engineering leader at Google (formerly leading Chrome Developer Experience and now involved with Google Cloud AI and tools like Gemini), delivered an insightful talk titled “The AI-Native Software Engineer”.

Presented at JSNation US 2025, the talk addresses a pivotal shift in software engineering amid rapid AI advancements in 2025 and beyond.

Osmani opens by posing a provocative question: Why are developers coding faster than ever before, yet shipping slower?

He attributes this paradox to the naive integration of AI tools—such as Cursor, GitHub Copilot, or Gemini—without evolving workflows, leading to more code but persistent bottlenecks in quality, review, deployment, and reliability.

Defining the AI-Native Software Engineer

At the core of the talk is the concept of an AI-native software engineer: someone who treats AI not as a replacement or mere assistant, but as a deep partner in their daily workflow. This mindset replaces the common fear, “Will AI replace me?”, with a proactive question: “How can AI help me do this faster, better, or differently?”

Osmani emphasizes that AI amplifies human capabilities, particularly for experienced engineers. Senior engineers can “context-engineer” prompts effectively, getting output comparable to what they would expect from a peer. He stresses that what counts as AI-native is a moving target: today’s practices will evolve rapidly, so continuous learning remains essential.

In the future, “AI-native” may simply become synonymous with “software engineer,” much like searching the web or using Stack Overflow became standard.

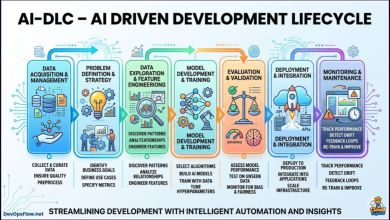

How AI Accelerates DevOps Flow Velocity

Osmani highlights a nuanced reality about AI’s impact on developer velocity (the speed of producing and shipping code).

While popular narratives often hype dramatic multipliers like 2x, 5x, or even 10x productivity gains from tools such as Cursor, GitHub Copilot, or Gemini, Osmani grounds the discussion in more measured observations—drawing from Google’s internal data, industry studies, and real-world developer experiences.

He points out that many teams and individual engineers see relatively modest improvements when AI is adopted naively or without refined workflows. In particular, he references scenarios where AI boosts raw coding speed but delivers only incremental gains overall, often around 10% (sometimes cited as 10-13% in related analyses of median outcomes).

This figure is consistent with controlled studies and enterprise benchmarks, which show basic AI usage (e.g., autocomplete-style features) typically yielding 10-30% productivity uplifts, far short of the “10x” claims that circulate in hype cycles.

Why the Gains Are Often “Only” Around 10%

Osmani explains this tempered impact through several interconnected factors:

- The “70% Trap” (or “Last Mile” Problem): AI excels at the initial 70% of a task—generating boilerplate, drafting functions, suggesting patterns, or handling repetitive logic. It accelerates this “inner loop” of coding dramatically. However, the remaining 30%—handling edge cases, ensuring security, observability, performance tuning, integration with legacy systems, and achieving production-grade reliability—requires deep human judgment, context, and verification. Without addressing this gap, the overall velocity boost flattens. Engineers end up spending extra time fixing AI hallucinations, subtle bugs, or misaligned implementations, which can offset much of the early speed gain. The result: faster code writing, but not necessarily faster, higher-quality shipping.

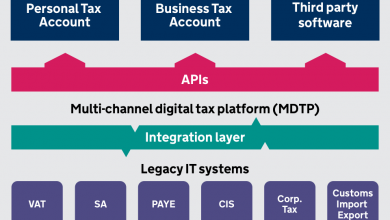

- Bottlenecks Shift Downstream: Even with faster code generation, other parts of the software development lifecycle (SDLC) become chokepoints. Osmani cites Google’s internal metrics showing pull request (PR) review times exploding (e.g., by ~91% in some cases) as teams grapple with higher volumes of AI-generated changes. Code reviews, testing, debugging, deployment, and compliance checks don’t accelerate at the same rate, leading to a net velocity improvement that feels underwhelming—often closer to 10% end-to-end when measured across the full cycle.

- Naive vs. Optimized Usage: Many developers treat AI as a simple accelerator without evolving their processes. Juniors might see bigger relative gains (as AI feels “magical” for learning and boilerplate), but seniors often report more modest uplifts unless they invest in “context-engineering”—crafting rich prompts, maintaining clean codebases for AI to reason over, and orchestrating agentic workflows. When adoption stays superficial (e.g., just tab-completing lines), the velocity bump hovers in the lower double digits. In contrast, disciplined AI-native practices can push toward 2x–5x (or higher) on specific tasks, though Osmani cautions that true 10x claims are rare and context-dependent.

- Amplification of Existing Practices: AI is a multiplier, not a magic wand. It rewards strong fundamentals (clear specs, modular design, good tests) and punishes weaknesses (poor architecture, technical debt). Teams or individuals with solid habits see compounding benefits, while others amplify chaos—leading to the modest 10%-ish net gain in many real-world rollouts.
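The arithmetic behind these factors can be sketched with a simple sequential-pipeline model: if coding is only a fraction of the delivery cycle, speeding it up moves the end-to-end number far less than the headline multiplier suggests. The stage names and hours below are hypothetical illustrations, not figures from the talk; only the ~91% review slowdown echoes the number cited above, and the function name `end_to_end_speedup` is an assumption made for this example.

```python
def end_to_end_speedup(stage_hours, stage_speedups):
    """Speedup of a sequential pipeline when only some stages change.

    stage_hours:    baseline hours per stage
    stage_speedups: factor per stage (2.0 = twice as fast, 0.5 = twice as slow);
                    stages not listed are unchanged (factor 1.0)
    """
    baseline = sum(stage_hours.values())
    new_total = sum(
        hours / stage_speedups.get(stage, 1.0)
        for stage, hours in stage_hours.items()
    )
    return baseline / new_total


# Hypothetical hours spent per change; coding is 20% of the cycle.
stages = {"coding": 2.0, "review": 4.0, "testing": 3.0, "deploy": 1.0}

# Scenario 1: AI doubles coding speed, nothing else changes.
coding_only = end_to_end_speedup(stages, {"coding": 2.0})

# Scenario 2: coding doubles, but review takes 91% longer.
slow_review = end_to_end_speedup(stages, {"coding": 2.0, "review": 1 / 1.91})

print(f"coding 2x only:         {coding_only:.2f}x end-to-end")
print(f"coding 2x, slow review: {slow_review:.2f}x end-to-end")
```

Under these assumed numbers, doubling coding speed alone yields roughly 1.11x end-to-end (about an 11% gain), and adding the review slowdown drops the result below 1.0x, meaning the team ships slower despite writing code faster.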

Moving Beyond the 10% Plateau

Osmani doesn’t dismiss AI’s value; he frames this modest baseline as a starting point, not a ceiling. His playbook urges engineers to escape the trap by:

- Shifting from “code writer” to “orchestrator” — directing AI agents across the full lifecycle (design, testing, ops).

- Embracing “trust, but verify” — rigorous reviews, automated testing, and human oversight to close the last 30%.

- Adopting habits like trio programming (senior + junior + AI) to balance velocity with skill-building and quality.

- Measuring true end-to-end velocity (not just lines of code or commits) and iterating on workflows.
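As one possible way to measure end-to-end velocity, a team could track the time from a change's first commit to its production deploy and watch the median over time. This is a minimal sketch under stated assumptions: the record fields `first_commit_at` and `deployed_at`, the helper name, and the sample timestamps are all hypothetical, and a real pipeline would pull them from version control and deployment logs.

```python
from datetime import datetime
from statistics import median


def median_lead_time_hours(changes):
    """Median hours from first commit to production deploy."""
    durations = [
        (c["deployed_at"] - c["first_commit_at"]).total_seconds() / 3600
        for c in changes
    ]
    return median(durations)


# Hypothetical change records (not data from the talk).
changes = [
    {"first_commit_at": datetime(2025, 1, 6, 9, 0),
     "deployed_at": datetime(2025, 1, 7, 9, 0)},    # 24 hours
    {"first_commit_at": datetime(2025, 1, 6, 10, 0),
     "deployed_at": datetime(2025, 1, 8, 10, 0)},   # 48 hours
    {"first_commit_at": datetime(2025, 1, 7, 9, 0),
     "deployed_at": datetime(2025, 1, 10, 9, 0)},   # 72 hours
]

lead_time = median_lead_time_hours(changes)
print(f"median lead time: {lead_time:.1f} hours")
```

Comparing this number before and after a workflow change reflects what actually reached users, which lines of code or commit counts cannot show.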

In essence, the ~10% figure Osmani references serves as a realistic wake-up call: AI tools do improve velocity meaningfully, but naive integration often yields incremental rather than revolutionary gains.

The real transformation comes from becoming truly AI-native—restructuring how work gets done to capture far greater amplification. As he concludes optimistically, engineers who master this shift don’t just code faster; they build better systems, learn continuously, and tackle bigger challenges—turning that initial 10% into sustained, compounding advantage in an AI-augmented era.