Keynote

The State of AI Code Quality: Hype vs Reality — Itamar Friedman, Qodo

AI is making code generation nearly effortless, but the critical question remains: can we trust AI-generated code for software that truly matters? Has it really become easier to build robust, high-quality systems?

In this talk, Itamar Friedman examines the gap between the hype around AI-powered code generation and the practical realities of maintaining high-quality, trustworthy software in the AI era.

He draws heavily on Qodo’s State of AI Code Quality report and related industry data to highlight both progress and persistent challenges.

The report is based on a survey of 609 developers across company sizes and regions. It documents the tension between rapid AI adoption and productivity gains on one side, and persistent challenges around trust, context awareness, and sustainable code quality on the other.

Key Points:

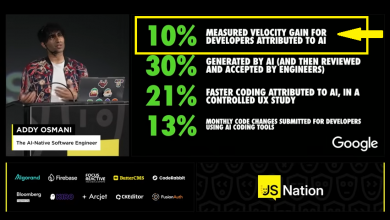

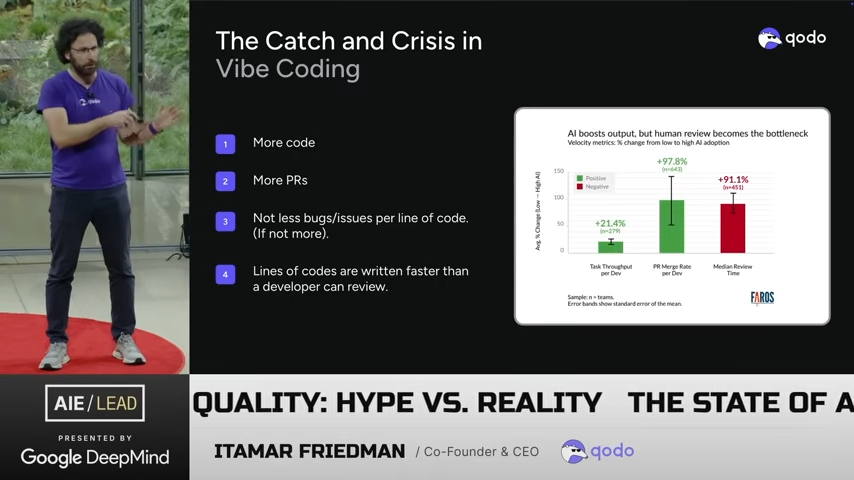

- The Quality Crisis Amid Productivity Gains: AI tools have made code generation much faster: 65% of developers report that at least a quarter of their code is AI-influenced, and many report significant productivity boosts. The gains come with costs, however: 67% of developers have serious concerns about code quality, time spent on pull request (PR) reviews is up 91%, 42% of developer time is wasted on fixing bugs and technical debt, and there are reports of 3x more security incidents in AI-assisted workflows. Friedman asks whether recent major cloud outages could be linked to rushed AI-generated code.

- Trust and Context Issues: A major finding is that 76-88% of developers do not fully trust AI-generated code, primarily due to poor context awareness leading to hallucinations and errors. Friedman stresses that “context is the foundation of quality,” advocating for tools that incorporate not just the codebase but also version history, PR comments, documentation, logs, and organizational knowledge.

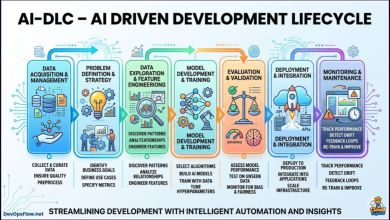

- Breaking the Glass Ceiling: Simple autocomplete-style code generation hits limits. To achieve real gains, organizations need to invest in:
  - Agentic code generation for more complex tasks.
  - Agentic quality workflows that automate checks outside the IDE.
  - Learning systems that dynamically adapt quality standards to the evolving codebase.

- AI as Gateway and Gatekeeper: Friedman positions AI not just as a generator but as an automated reviewer and quality enforcer. AI can flag high-severity issues in ~17% of PRs, enforce custom rules (e.g., avoiding nested if-statements), generate tests, and create living documentation, potentially leading to outcomes like 85% fewer security vulnerabilities and 3x better test coverage. He discusses quality at both the code level (functional and non-functional) and the process level (guardrails, verification, standards).

- Future Outlook: The vision is for Gen-3.5+ development with context-aware, multi-agent systems handling specs, tests, and implementation in parallel. Quality becomes a competitive advantage for long-term velocity, but AI remains a tool requiring rigorous frameworks, human oversight, and investment in context and automated gates.
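To make the "custom rules" idea from the gatekeeper point concrete, here is a minimal sketch of what an automated check for nested if-statements could look like. It is not Qodo's implementation or anything shown in the talk; the function name `find_nested_ifs` and the `max_depth` parameter are hypothetical, and the check uses only Python's standard `ast` module.

```python
import ast


def find_nested_ifs(source: str, max_depth: int = 1) -> list[int]:
    """Return line numbers of `if` statements nested deeper than max_depth.

    Hypothetical rule for illustration only. Simplification: a lone `if`
    inside an `else:` block is treated like `elif`, because the AST
    represents both the same way.
    """
    violations: list[int] = []

    def visit(node: ast.AST, depth: int) -> None:
        if isinstance(node, ast.If):
            depth += 1
            if depth > max_depth:
                violations.append(node.lineno)
            for child in node.body:
                visit(child, depth)
            # An `elif` is parsed as a single If inside `orelse`;
            # keep it at the same depth as the `if` it belongs to.
            if len(node.orelse) == 1 and isinstance(node.orelse[0], ast.If):
                visit(node.orelse[0], depth - 1)
            else:
                for child in node.orelse:
                    visit(child, depth)
            return
        for child in ast.iter_child_nodes(node):
            visit(child, depth)

    visit(ast.parse(source), 0)
    return violations


SAMPLE = """
def f(a, b):
    if a:
        if b:
            return 1
    elif b:
        return 2
    return 0
"""

if __name__ == "__main__":
    for lineno in find_nested_ifs(SAMPLE):
        print(f"line {lineno}: nested if-statement")
```

A check like this could run as a pre-merge gate outside the IDE, which is the kind of process-level guardrail the talk describes; a real rule engine would also need configuration, suppression, and reporting.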

The talk balances realism—acknowledging hype and current shortcomings—with optimism about AI-driven improvements in code review, testing, and standards enforcement when properly implemented.